How to Measure FLOP/s for Neural Networks Empirically? – Epoch

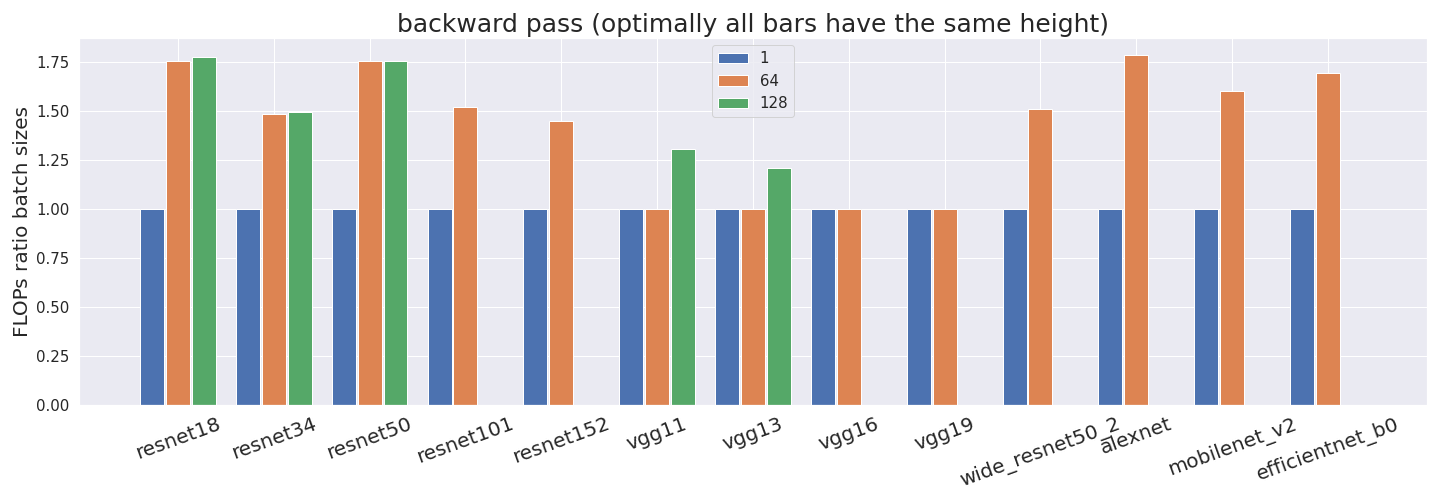

Computing the utilization rate for multiple Neural Network architectures.
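
As a rough illustration of what computing a utilization rate involves, the sketch below divides the achieved FLOP/s (an estimated FLOP count over a measured wall-clock time) by the hardware's peak FLOP/s. The function names, the dense-layer FLOP estimate (two operations per weight per example, forward pass only, ignoring bias and activations), and the numbers in the usage lines are all illustrative assumptions, not taken from the article.

```python
def dense_forward_flop(batch_size, in_features, out_features):
    """Approximate forward-pass FLOP for one dense layer.

    Assumption: one multiply and one add per weight per example;
    bias terms and activation functions are ignored.
    """
    return 2 * batch_size * in_features * out_features


def achieved_flops(total_flop, elapsed_seconds):
    """Achieved throughput in FLOP/s from a FLOP count and a measured time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return total_flop / elapsed_seconds


def utilization_rate(total_flop, elapsed_seconds, peak_flops):
    """Fraction of the hardware's peak FLOP/s that was actually achieved."""
    if peak_flops <= 0:
        raise ValueError("peak_flops must be positive")
    return achieved_flops(total_flop, elapsed_seconds) / peak_flops


# Hypothetical numbers: a 1024x1024 dense layer, batch of 32,
# measured at 1 ms per forward pass on hardware with a 1 TFLOP/s peak.
flop = dense_forward_flop(batch_size=32, in_features=1024, out_features=1024)
rate = utilization_rate(flop, elapsed_seconds=1e-3, peak_flops=1e12)
print(f"estimated FLOP: {flop}, utilization: {rate:.2%}")
```

In practice the elapsed time would come from profiling a real training or inference step, and the peak value from the hardware vendor's specification for the numeric precision in use.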

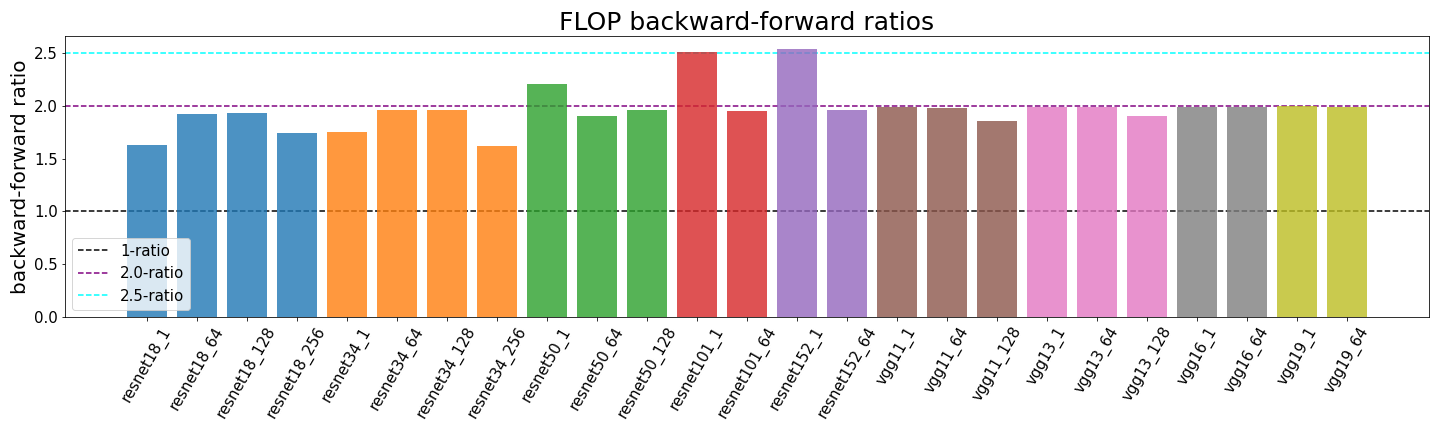

What's the Backward-Forward FLOP Ratio for Neural Networks? – Epoch

Applied Sciences, Free Full-Text

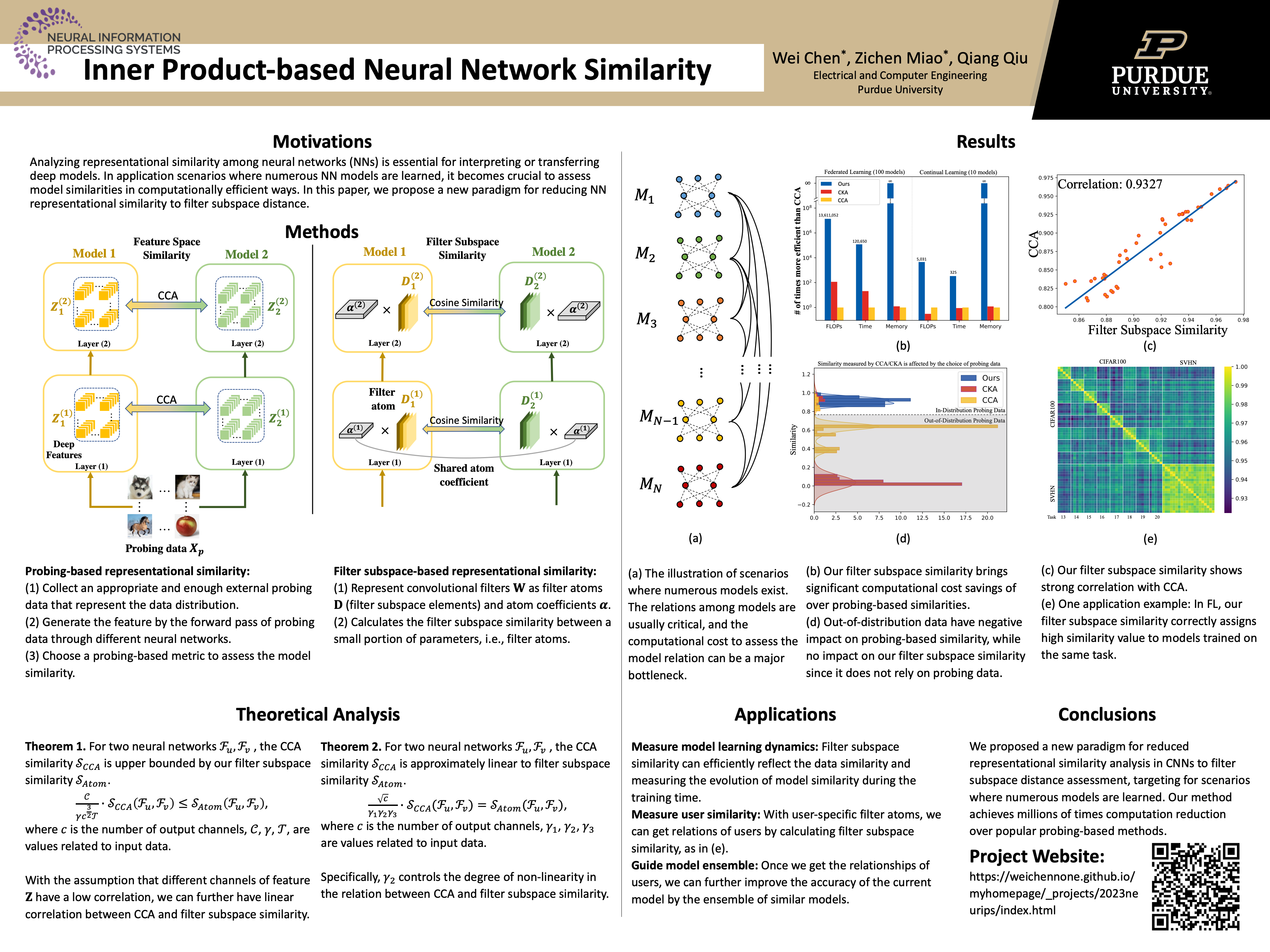

NeurIPS 2023

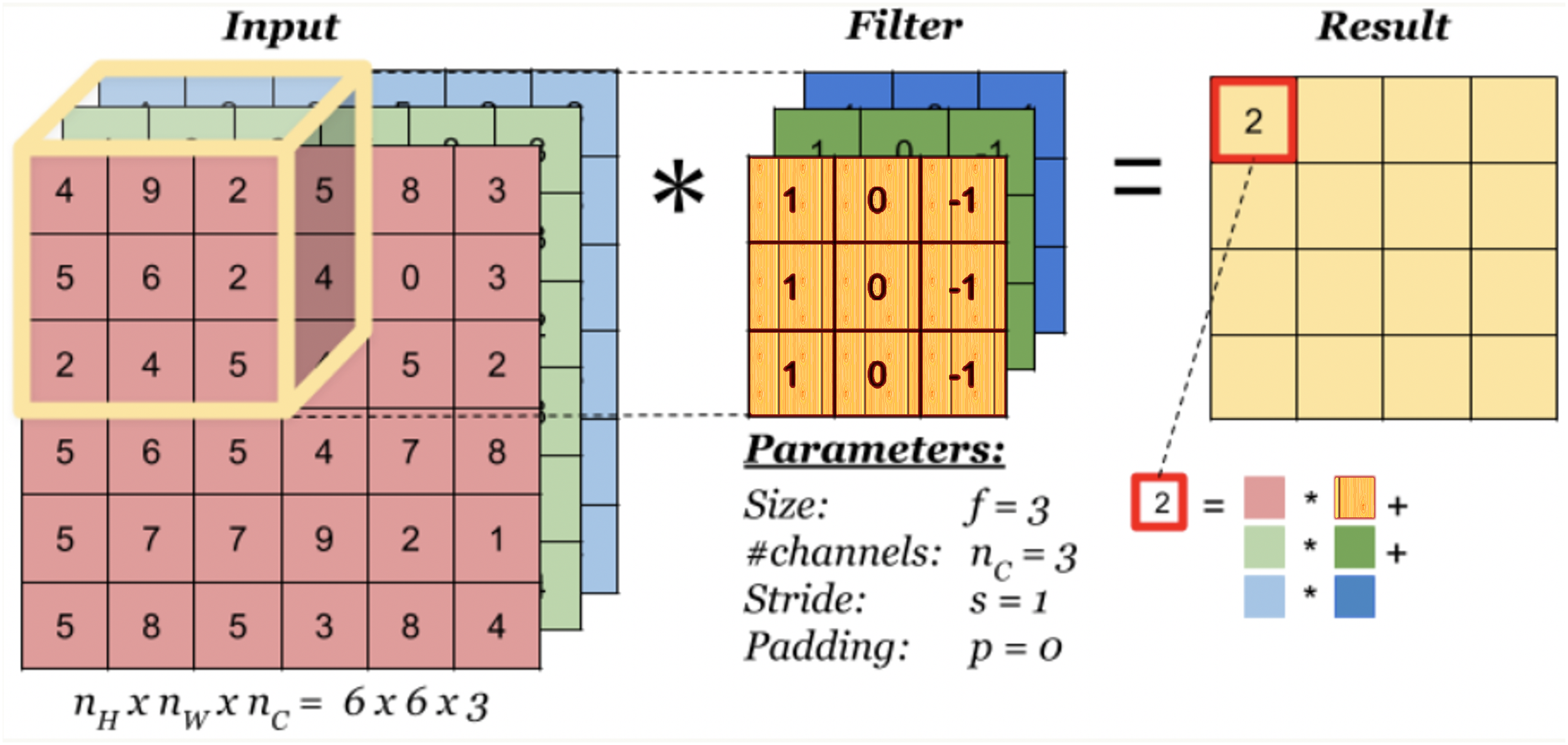

Multi-objective simulated annealing for hyper-parameter optimization in convolutional neural networks [PeerJ]

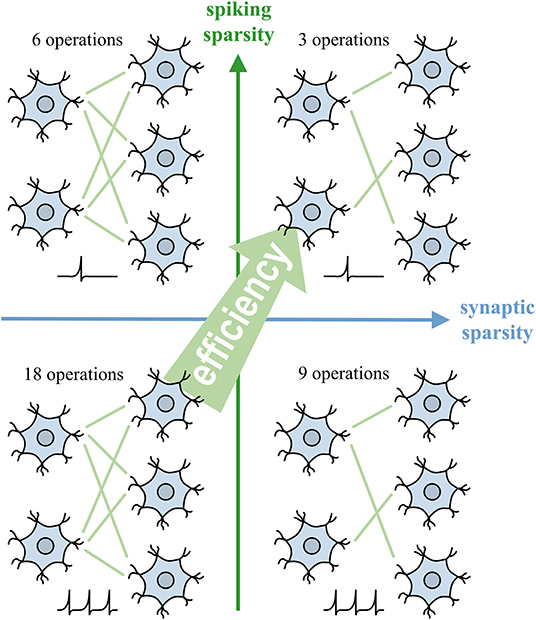

Frontiers | Backpropagation With Sparsity Regularization for Spiking Neural Network Learning

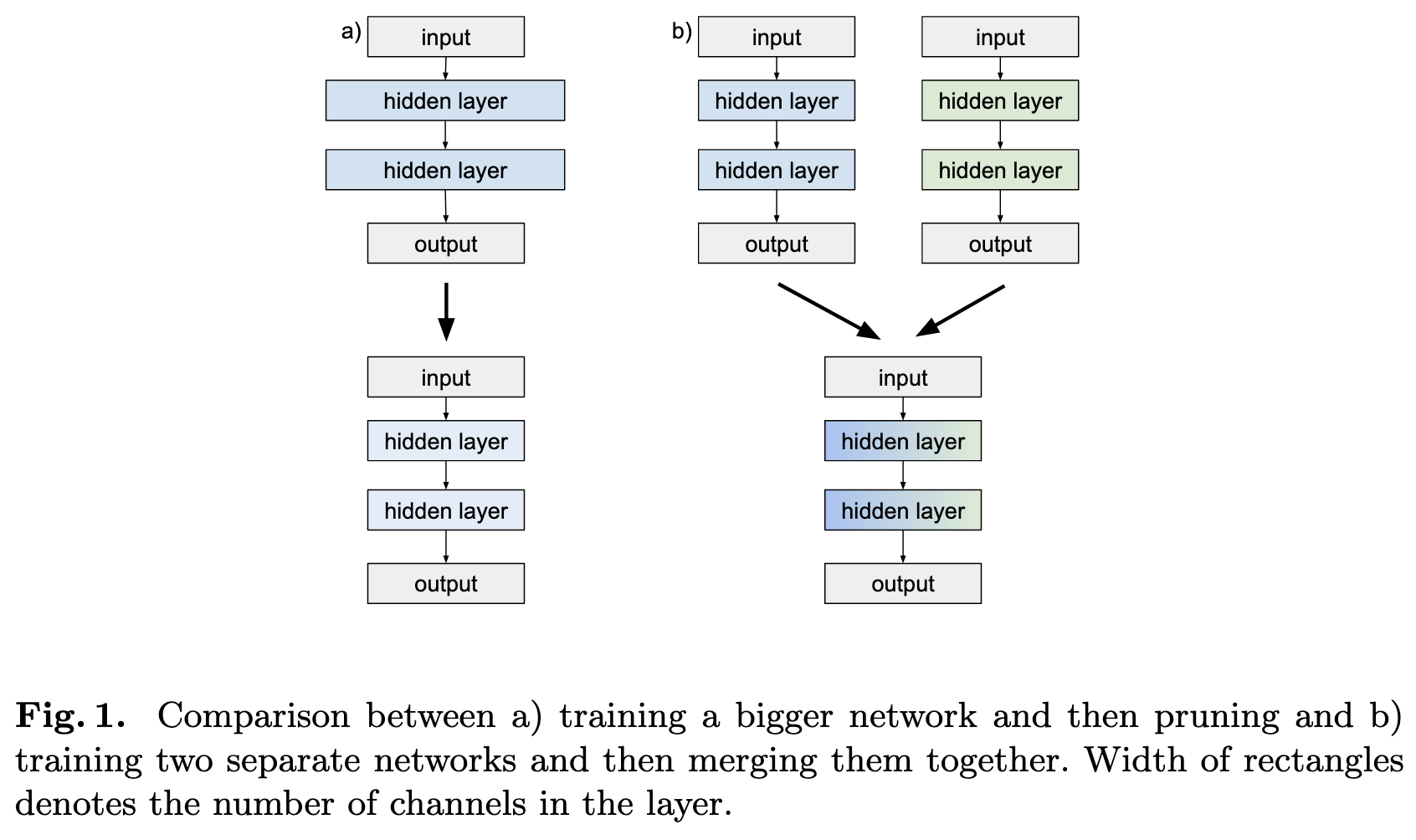

2022-4-24: Merging networks, Wall of MoE papers, Diverse models transfer better

Convolutional neural network-based respiration analysis of electrical activities of the diaphragm

Training error with respect to the number of epochs of gradient

When do Convolutional Neural Networks Stop Learning?

What can flatness teach us about why Neural Networks generalise?, by Chris Mingard

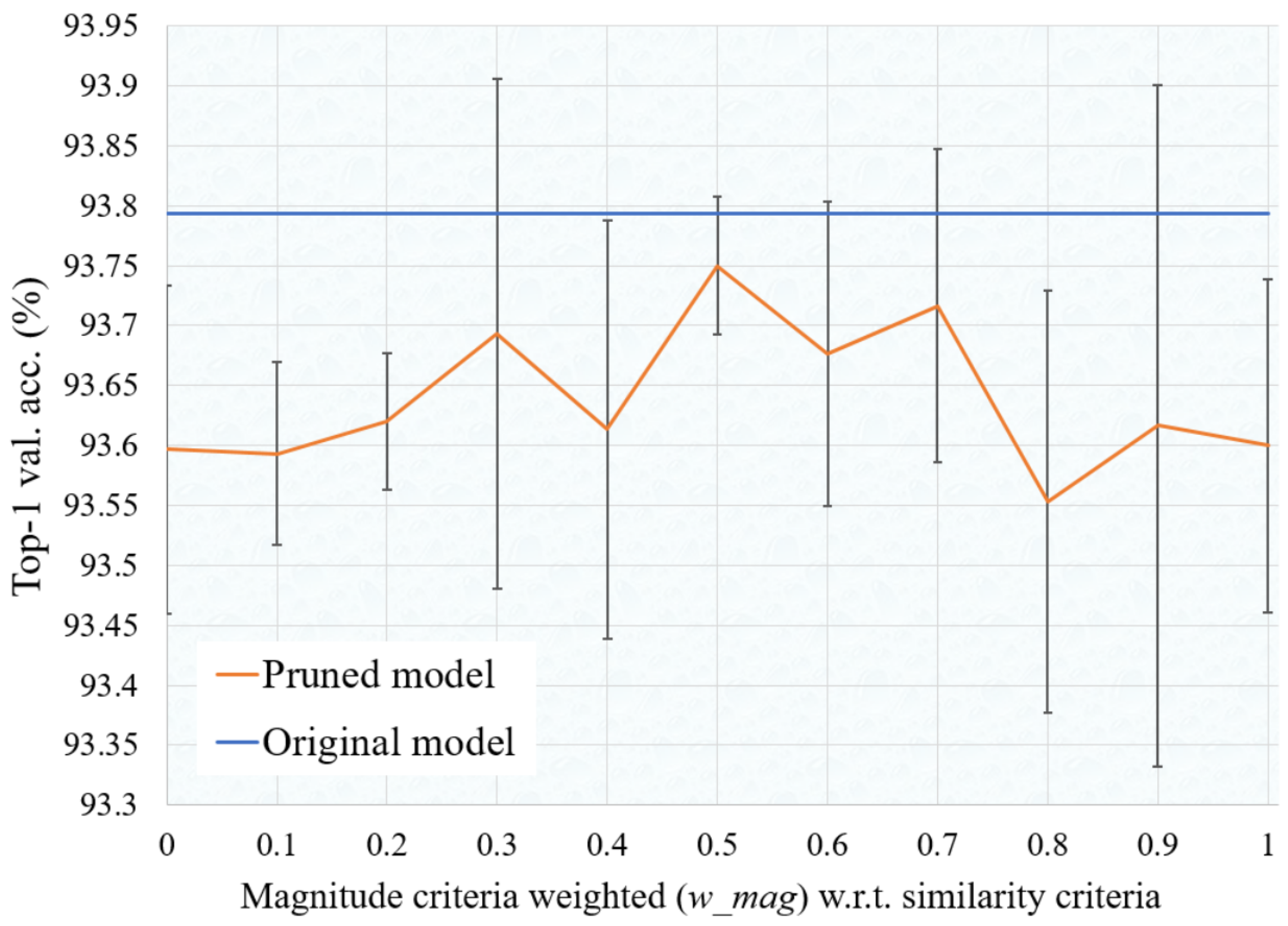

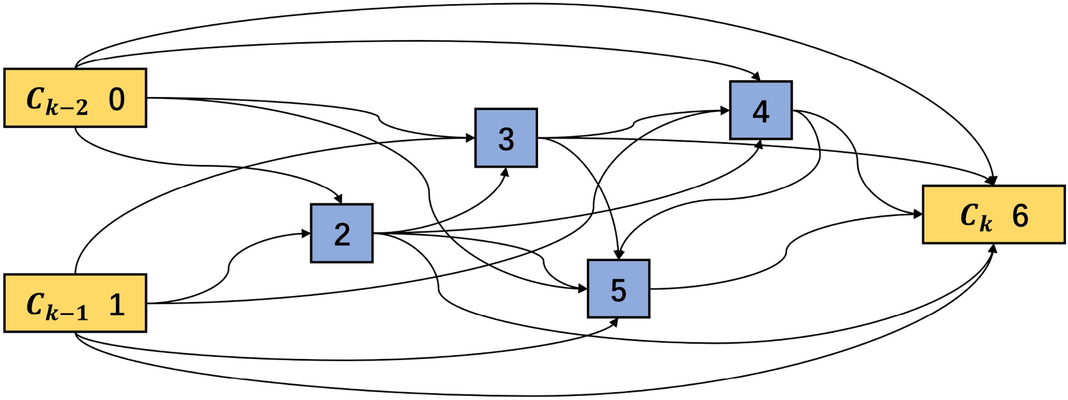

Light convolutional neural network by neural architecture search and model pruning for bearing fault diagnosis and remaining useful life prediction

How to calculate the amount of memory needed for a deep network - Quora